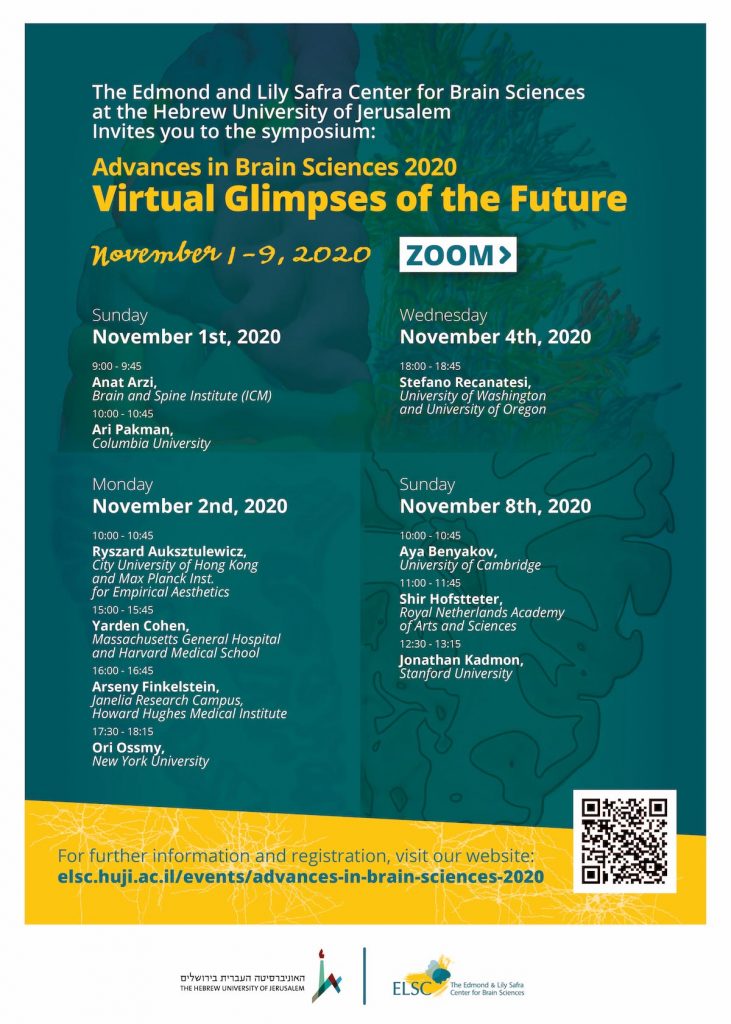

Virtual Glimpses of the Future

November 1-9, 2020

On ZOOM

Sunday, November 1st, 2020

09:00-09:45- Anat Arazi

Brain and Spine Institute (ICM)

Deciphering the world under loss of consciousness: from natural sleep to pathological unconsciousness

Within a single day, we undergo behavioral, physiological and neurochemical changes, from vigilant wakefulness to unconscious sleep. Yet, despite the loss of consciousness during sleep, some sensory processing continues. What are the principles governing whether, when and which information will be processed while we are unconscious? I will present a series of studies in which I investigated sensory and cognitive processing during unconsciousness in healthy populations and neurological patients by employing different sensory modalities, physiology, electrophysiology and neuroimaging. First, combining simultaneous full-night sleep EEG and nap EEG-fMRI recordings, we discovered stimulus-conscious state interactions throughout the auditory processing time course. These findings indicate a dynamic reorganization of auditory information across the sleep-wake cycle. Second, extending from sensory processing to cognition, we demonstrated that humans can learn entirely new associations during sleep and that sleep-learning is supported by an interplay between slow-waves, sigma and theta activity. These findings suggest that timely modulation of these brain rhythms may determine the acquisition and storage of new memories during sleep. Third, expanding my investigation to additional states of altered consciousness, I turned to the puzzling state of brain-injured patients with disorders of consciousness. We revealed that olfactory sniff-responses predicted regaining of consciousness and long-term survival at the single-patient level, providing an accessible bedside tool that signals recovery in patients with severe brain injuries. Altogether, these findings demonstrate an intricate relation between conscious state and the ability to process the richness of the external world, and offer a novel approach for detecting consciousness recovery.

10:00-10:45- Ari Pakman

Columbia University

Bayesian Methods for Statistical Neuroscience

Novel experimental techniques (e.g. optogenetics, calcium imaging, high-density multi-electrode arrays) are producing massive amounts of data, creating new challenges and opportunities for statistical neuroscience. I will present several novel statistical and machine-learning computational tools motivated by neuroscience problems, in the context of two major Bayesian inference paradigms: Markov chain Monte Carlo and amortization via deep learning. The usefulness of these techniques will be illustrated in experimental settings that include finding synaptic locations in dendrites, classifying neural types using genetic markers, and spike sorting in multi-electrode arrays.

Monday, November 2nd, 2020

10:00-10:45- Ryszard Auksztulewicz

City University of Hong Kong and Max Planck Institute for Empirical Aesthetics

Neural mechanisms of predictive processing across sensory features and cognitive demands

The brain is thought to generate internal predictions to optimise behaviour. However, it is unclear to what extent these predictions are modulated by other top-down factors such as attention and task demands, and whether predictions of different sensory features are mediated by the same neural mechanisms. In this talk I will present results of several studies combining human and rodent electrophysiology with computational modelling to identify the neural mechanisms of sensory predictions and their interactions with other cognitive factors.

First, sensory predictions and temporal attention were orthogonally manipulated in an auditory mismatch paradigm recorded with magnetoencephalography (MEG), revealing interactive effects on evoked response amplitude. This interaction effect was modelled in a canonical microcircuit using dynamic causal modelling (DCM). While mismatch responses were explained by a recursive interplay of sensory predictions and prediction errors, their attentional modulation was linked to increased early sensory gain.

Second, I will present a study that independently manipulated the spatial/temporal predictability of visual targets and the relevance of spatial/temporal information provided by auditory cues. Relevance modulated the influence of predictability on task performance. To explain these effects, participants’ predictions were estimated using a Hierarchical Gaussian Filter (HGF). Model-based time series of predictions and prediction errors were linked to dissociable induced activity measured with MEG. Predictions correlated with beta-band activity, while prediction errors were signalled by increased gamma-band and decreased alpha-band activity. Crucially, these oscillatory correlates were modulated by task relevance, suggesting that current goals influence prediction signalling.

Finally, I will present an analysis of electrocorticographic data recorded from patients performing a task that orthogonally manipulated the “what” and “when” predictability of auditory targets. The two predictability types modulated evoked responses in different cortical regions and at dissociable latencies. DCM served to disambiguate between models of stimulus predictability in terms of top-down processing and gain modulation: “what” predictability increased auditory short-term plasticity, while “when” predictability increased putative synaptic gain in motor areas. This suggests that distinct predictions are mediated by qualitatively different neural mechanisms. Further insights into the diversity of neural signatures of prediction errors will be discussed in the context of electrophysiological recordings from the rat auditory cortex and a homologous study in human volunteers.

15:00-15:45- Yarden Cohen

Massachusetts General Hospital and Harvard Medical School

Hidden neural states underlie canary song syntax

Songbirds are outstanding models of motor sequence generation, but commonly studied species do not share the long-range correlations of human behavior – skills like speech, where sequences of actions follow syntactic rules in which transitions between elements depend on the identity and order of past actions. To support long-range correlations, the ‘many-to-one’ hypothesis suggests that redundant premotor neural activity patterns, called ‘hidden states’, carry a short-term memory of preceding actions.

To test this hypothesis, we recorded from the premotor nucleus HVC in a rarely studied species, the canary, whose complex sequences of song syllables follow long-range syntax rules spanning several seconds.

In song sequences spanning up to four seconds, we found neurons whose activity depends on the identity of previous or upcoming transitions, reflecting hidden states that encode song context beyond ongoing behavior and demonstrating a deep many-to-one mapping between HVC states and song syllables. We found that context-dependent activity correlates more often with the song’s past than with its future, occurs selectively in history-dependent transitions, and also encodes timing information. Together, these findings reveal a novel pattern of neural dynamics that can support structured, context-dependent song transitions and validate predictions of syntax generation by hidden neural states in a complex singer.

16:00-16:45- Arseny Finkelstein

Janelia Research Campus, Howard Hughes Medical Institute

Mechanisms of cortical communication during decision-making

Regulation of information flow in the brain is critical for many forms of behavior. In the first part of my talk, I will focus on mechanisms that regulate interactions between brain regions and describe how information flow from the sensory cortex can be gated by state-dependent frontal cortex dynamics during decision-making in mice. In the second part, I will focus on information flow within the frontal cortex microcircuitry and present a new optical method that I developed for rapid mapping of local connectivity in vivo. Combining connectivity mapping with a novel paradigm of innate mouse behavior revealed a columnar structure in the frontal cortex and the existence of neurons that function as network hubs, with an unexpectedly high number of connections and a strong influence on neighboring neurons. Finally, I will show that analyses of interactions between >20,000 neurons, recorded simultaneously across multiple cortical areas, revealed a previously unknown parcellation of the mouse cortex into functional modules, calling for a revision of existing definitions of cortical regions.

17:30-18:15- Ori Ossmy

New York University

From macro to micro: Real-time processes in the development of behavioral problem solving

Behavioral problem solving is ubiquitous across every age and culture: how to navigate a cluttered environment, use a tool, and so on. As our bodies, skills, and environments change, new problems emerge and require new means to solve them. With learning and development, children respond more adaptively and efficiently to environmental challenges and opportunities. Traditionally, developmental research has focused on macro changes in problem-solving skills by identifying the ages at which children solve various problems and characterizing differences among children at different points in learning or development. This outcome-oriented approach established that behavioral problem solving begins in infancy and improves with age and experience, but researchers can only speculate about how and why change occurs. In contrast, my central hypothesis is that macro changes in problem solving emerge from micro, real-time experiences. These real-time experiences, in turn, play out in an interactive system of perceptual, neural, cognitive, and motor processes. The efficiency of these processes and of their interactions differs widely among individuals. From cruising infants to soccer-playing robots, I test this hypothesis by adopting an innovative integrative approach that combines interdisciplinary perspectives (child development, cognitive neuroscience, motor control, computer science), methods (eye-tracking, EEG, motion tracking, robotics, computer vision, virtual reality, and video), ages (infants, children, adults), and tasks (manual and locomotor).

Wednesday, November 4th, 2020

18:00-18:45- Stefano Recanatesi

University of Washington and University of Oregon

Constraints on neural dimensionality: a mesoscale approach linking learning to complex behavior

The link between behavior, learning and the underlying connectome is a fundamental open problem in neuroscience. In my talk I will show how it is possible to develop a theory that bridges these three levels (animal behavior, learning and network connectivity) based on the geometrical properties of neural activity. The central tool in my approach is the dimensionality of neural activity. I will link complex animal behavior to the geometry of neural representations, specifically their dimensionality; I will then show how learning shapes these geometrical properties and how local connectivity properties can further regulate them. As a result, I will explain how the complexity of neural representations emerges from both behavioral demands (top-down approach) and learning or connectivity features (bottom-up approach). I will build these results on neural dynamics and representations from the analysis of neural recordings, using theoretical and computational tools that blend dynamical systems, artificial intelligence and statistical physics approaches.

Sunday, November 8th, 2020

10:00-10:45- Aya Benyakov

University of Cambridge

When and what of episodic encoding

In striving for experimental control, studies of human episodic memory have focused mainly on the encoding of brief, stationary events. Such events, while providing a high degree of control, bear little resemblance to real-life memory and constrain the questions that can be asked. I will demonstrate how the use of naturalistic stimuli enables us to address previously unaskable questions, discussing a set of fMRI studies in which we asked when episodic memories are formed. Using film clips as a proxy for real-life memory, we found that hippocampal activity time-locked to the offset of events, but not to their onset or duration, is linked to subsequent memory. In a follow-up study, we analysed the brain activity of over 200 participants who viewed a naturalistic film and found that the hippocampus responded both reliably and specifically to shifts between scenes. Taken together, these results suggest that during the encoding of a continuous experience, event boundaries drive hippocampal processing, potentially reflecting the encoding of bound representations into long-term memory. I will discuss how this surprising finding opened a new avenue of research, asking what is encoded during episodic encoding: is each element encoded independently, or is the entire episode encoded as a cohesive unit?

11:00-11:45- Shir Hofstetter

Royal Netherlands Academy of Arts and Sciences

Properties and constraints of cognitive topographic maps in the human brain

Topographic maps are a key organizational principle of primary motor and sensory cortices. Recently, studies extended the notion of topography from the sensory cortices to the association cortices, uncovering dedicated topographic maps for cognitive dimensions such as visual numerosity, visual object size and visual time duration. We further examined the topographic organizational principles underlying some of our cognitive abilities. First, we asked whether topographic maps of cognitive dimensions depend on the driving sensory modality or represent cognitive dimensions irrespective of it. We investigated this question using numerosity-selective population receptive field (pRF) models, ultra-high field (7T) fMRI, and haptic and visual numerosity stimuli. We provide the first evidence of a distinct network of haptic topographic numerosity maps and propose that overlapping sensory-specific topographic maps facilitate supramodal cognitive perception. Second, we asked to what extent topographic maps are constrained by development, and whether maps can represent a new sensory experience learned in adulthood. To that end, we investigated ‘retinotopic’ mapping in MM, a congenitally blind subject, using a set of tools that included a visual-to-auditory sensory substitution device and pRF modeling. We found topographic maps dedicated to each dimension of the learned space. Overall, the two studies support the view that higher cognitive dimensions may be organized in topographic maps, and that cortical maps can be adapted or “recycled” in adulthood to represent novel sensory experiences.

12:30-13:45- Jonathan Kadmon

Stanford University

Robust neural computation in the face of noise: from decoding and data analysis to perception and brain communication

Neuronal networks face a formidable task: performing reliable computations in the face of intrinsic stochasticity in individual neurons, external nuisance inputs, imprecisely specified connectivity, and nonnegligible delays in synaptic transmission. Overcoming such difficulties in distributed sensorimotor circuits can be essential for animal survival. Large circuits can overcome widespread noise by distributing the computation across many neurons so that uncorrelated noise averages out, which suggests that the expected error falls as the inverse square root of the number of neurons. A recent theory of predictive coding in spiking neurons demonstrated that the error could instead decay inversely with network size (as one over the number of neurons) when neurons cooperate through recurrent connectivity, enabling high-fidelity encoding. However, this theory breaks down in the presence of small time delays in synaptic and axonal transmission, and its robustness to noise and synaptic heterogeneity remains poorly understood. I will introduce a general framework for predictive coding through synaptic balance. Here, high synaptic efficacy results in a dynamic cancellation of feedback and feedforward currents, leaving a residual proportional to the prediction error. By varying the synaptic balance, the network can adapt to different conditions of noise, heterogeneity, and delays through an optimal balance that minimizes error. Interestingly, an emergent property of near-optimal coding is the appearance of global network oscillations, which are prominently observed in the brain.
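The averaging baseline in this abstract can be made concrete with a minimal Python sketch built on simplifying assumptions. Each of N model neurons reports the same hypothetical signal value, corrupted by independent Gaussian noise with standard deviation σ = 1, and the readout is the plain average of their outputs. The sketch covers only that averaging argument. It does not implement the speaker's synaptic-balance framework, and the function `rms_error_of_average` and its parameters are hypothetical.

```python
import math
import random


def rms_error_of_average(n_neurons: int, noise_sd: float = 1.0,
                         n_trials: int = 2000, seed: int = 0) -> float:
    """Empirical root-mean-square error of a readout that averages
    n_neurons copies of a signal, each with independent Gaussian noise.

    Simplifying assumption (not from the abstract): all neurons encode the
    same value and their noise is uncorrelated and identically distributed.
    """
    rng = random.Random(seed)  # seeded so the estimate is reproducible
    signal = 1.0               # hypothetical true value encoded by every neuron
    sq_err = 0.0
    for _ in range(n_trials):
        # Average the noisy reports of all neurons on this trial.
        estimate = sum(signal + rng.gauss(0.0, noise_sd)
                       for _ in range(n_neurons)) / n_neurons
        sq_err += (estimate - signal) ** 2
    return math.sqrt(sq_err / n_trials)


if __name__ == "__main__":
    for n in (1, 4, 16, 64, 256):
        empirical = rms_error_of_average(n)
        predicted = 1.0 / math.sqrt(n)  # sigma / sqrt(N) with sigma = 1
        print(f"N={n:4d}  empirical={empirical:.3f}  predicted={predicted:.3f}")
```

Under these assumptions the empirical error tracks σ/√N, so quadrupling N roughly halves the error. An error that scales as 1/N, as the abstract attributes to recurrent predictive coding, would instead shrink by a factor of four.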

In the second part of the talk, I will address the difficulties of extracting insights from data when the underlying activity is dominated by noise. Tensor Component Analysis (TCA) is a dimensionality reduction method that can extract meaningful patterns from multi-modal data. However, current TCA algorithms fail when the signal-to-noise ratio (SNR) in the data is low. To adapt the method to the analysis of circuits with noisy neurons, I develop and analyze a new TCA algorithm that successfully performs Bayesian inference on arbitrary tensors at low SNR. This analysis not only yields superior algorithms but also estimates the amount of data and the SNR required for success.

Lastly, I will present theory-experiment collaborations in which we study the robustness of cortical circuits to noise. First, we demonstrate that the visual cortex is as sensitive to external inputs as it can be without spontaneous activity triggering false-positive percepts. Second, we show that communication between the cortex and the cerebellum requires a plastic denoising step by the basal pontine nuclei, yielding a reliable sparse representation of motor behavior in granule cells. Overall, this body of work demonstrates how theory can provide fundamental insights into the robustness of neural computation, guide the development of robust data-analysis algorithms, and help interpret experimental data.
